The release of the 1921 census returns for England and Wales earlier this year led to some (fairly heated) discussion on social media regarding the quality of the transcription provided by Findmypast, the National Archives’ commercial partners in the online launch. Findmypast even went as far as issuing a (partial) apology:

Due to the secure nature of the 1921 Census project, the period of time in which we have been able to access and review the data ahead of launch has been limited.

They went on to say that they had been “unable to conduct the same level of quality assurance checks we would normally apply”.

I wrote about the release (and the releases of previous decennial censuses) in an earlier blog post but one aspect that I didn’t touch on then was the question of transcription.

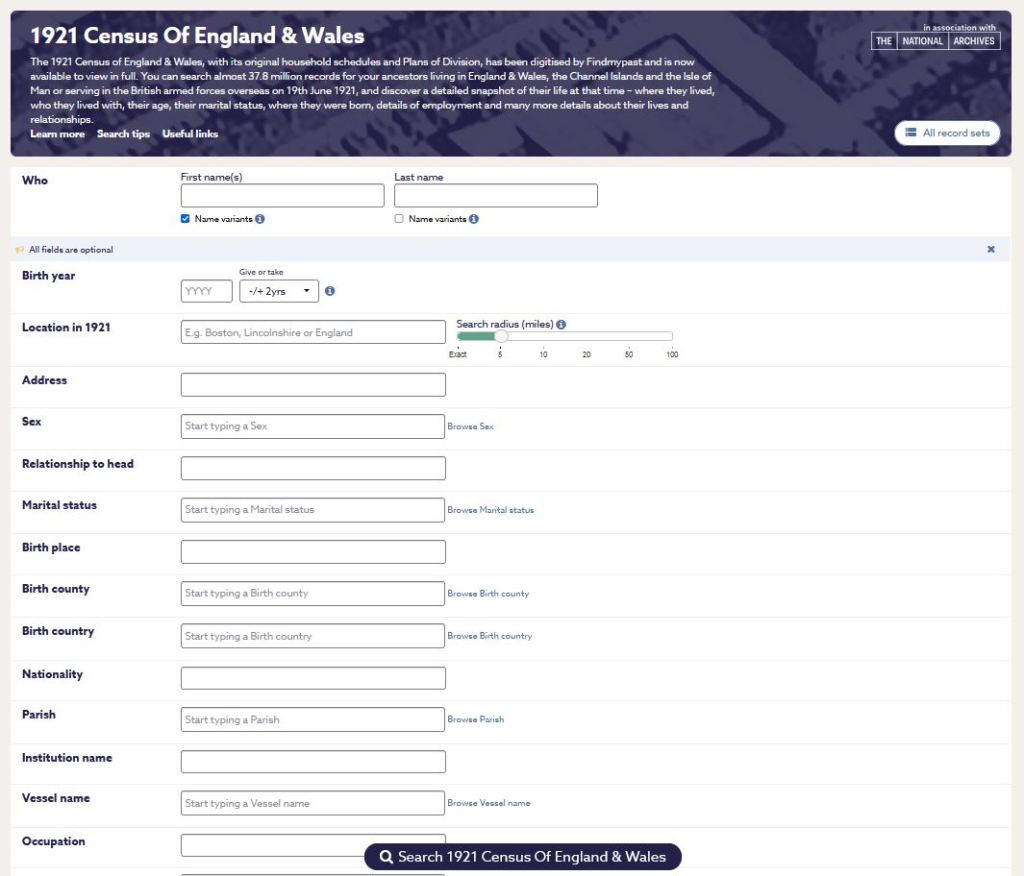

Findmypast offer two ways of accessing the 1921 census. After carrying out your search (you can search for ‘an ancestor’ or for ‘an address’) you’re presented with two options: you can either view the ‘Record transcript’ (for £2.50) or the ‘Record image’ (for £3.50).

Personally, I can’t see any point in paying £2.50 for the privilege of seeing what someone else thinks was written on a schedule when, for just £1.00 more, I can view the document itself and make my own mind up, but we’ll leave that particular issue for another day. It’s the question of charging users to view what was inevitably going to be an imperfect transcript that got me thinking more generally about the whole process and practice of transcription: what is it and why do we do it?

I think it’s important to understand right from the start that transcribing a set of records and creating an index to a set of records are two very different disciplines with different ends in mind. So let’s look at the processes behind them and then we’ll come back and see how we can apply it all to the question of the quality of the transcription in the 1921 census.

Until fairly recent times, accessing original documents involved, by necessity, a visit to an archive or a library. The Latter Day Saints (in the shape of the Genealogical Society of Utah) led the way in the 1930s by embarking on an extensive microfilming project. This allowed researchers, for the first time, to view original documents remotely (via their local LDS Family History Centre), or at least to view photographic images of them.[1]

There were, of course, other ways that researchers could access documents before the advent of microfilm, and for most of these we need to raise a glass of thanks to that Early Modern/Georgian/Victorian institution: the gentleman antiquarian. This isn’t the time or the place to consider the methods and behaviours of the antiquarians; they are an often-maligned group of men (and they were, I think I’m right in saying, exclusively male, middle-aged, well-to-do and white) but despite their sometimes questionable approach to ‘research’ and their occasionally selective approach to their work (their focus is undeniably on the records of those in the ‘upper echelons’ of society) it would be wrong to deny the crucial role they played in transcribing and translating thousands of medieval documents and thereby preserving them (or their contents, at least) for future generations of researchers.

The work of the antiquarians was published in County Histories and in the journals of Historical Societies: parish registers, deeds, charters, wills and inquisitions post mortem were all grist to the antiquarians’ mills and we are their undoubted beneficiaries.

Increasingly, as the centuries went by, the custom of providing indexes at the end of these works developed – often separate indexes for the ‘personal names’ (index nominum) and the ‘places’ (index locorum) mentioned in the book. Of course, now, thanks to websites such as Google Books and the Internet Archive, we can not only read them, but search them for our ancestors’ names or for references to the places that they lived and worked in – whether the originals were indexed or not – but the point here is that, in the original works, the transcription and the indexing were two quite separate processes. First comes the work itself, then the names and other details are picked out, sorted into alphabetical order and linked (usually through page references) back to the original entry.
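For anyone who likes to see a process spelled out, the indexing step described above can be sketched in a few lines of Python. This is only an illustration of the general idea (pick out the names, sort them alphabetically, link them back by page reference); the names, page numbers and the `build_name_index` function are all made up for the example, not taken from any real publication.

```python
from collections import defaultdict

# Hypothetical transcribed pages: page number -> personal names found on that page.
# All names and page numbers are invented for illustration.
pages = {
    12: ["Thomas Annal", "Mary Firth"],
    47: ["Mary Firth"],
    103: ["William Bews", "Thomas Annal"],
}


def build_name_index(pages):
    """Return (name, sorted page references) pairs, ordered by surname."""
    index = defaultdict(set)
    # First comes the work itself; here we simply pick the names out of it.
    for page, names in pages.items():
        for name in names:
            index[name].add(page)
    # Sort by surname (taken naively as the last word), then by the full name.
    ordered = sorted(index.items(), key=lambda item: (item[0].split()[-1], item[0]))
    return [(name, sorted(refs)) for name, refs in ordered]


name_index = build_name_index(pages)
for name, refs in name_index:
    print(f"{name}: {', '.join(str(page) for page in refs)}")
```

The transcribed text is never altered by this step: the index is a separate product that simply points back into it.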

In many ways, the works of the antiquarians are surrogates for the originals, effectively – for research purposes at least – replacing the documents themselves. After all, why go to all the trouble of seeking out an original document when you can read the text in a published book?

The post-war boom in local and family history research gave rise to a new phenomenon: the practice of indexing key genealogical sources for the benefit of the growing numbers of active family historians. Records such as census returns, monumental inscriptions, poor law records and wills became the focus of projects carried out by local and family history societies and for many years towards the end of the 20th century these locally produced indexes became vital resources for researchers, whether in printed form or on microfiche.

The indexes were largely produced by volunteers; volunteers who usually had a degree of local expertise which they could use to help them to interpret some of the trickier text. The importance of local knowledge when it comes to this sort of work cannot be overestimated.

As a researcher, working in the 1980s and 1990s, I benefited enormously from the work carried out by the various family history societies. I bought copies of census indexes (I even had one of those new-fangled, hi-tech microfiche readers in my home office!) and I used them to search for families, knowing that finding a possible hit was only the start of the journey.

Because these census indexes were not designed to replace the documents but rather to lead researchers to the original returns. Indeed many of them were simply surname indexes, providing nothing but the surname and a reference to the page/folio where the relevant entry would be found. Some included additional details such as first names and ages but they were never intended to act as a substitute for the records themselves. They were a means of access and nothing more.

In 1988 a more ambitious project was launched by the Genealogical Society of Utah in partnership with the Federation of Family History Societies (now the Family History Federation) with the aim of transcribing the whole of the British 1881 census. I plan to write in more detail about this project in a future blog post so I’ll just say here that the transcription was done by volunteers, mainly people with local knowledge, that the whole project took four years to complete, and that the ‘output’ was, initially at least, published on microfiche, followed by a set of CD-ROMs.

Then along came the internet … and everything changed …

As I’m sure I’ve mentioned before, I was lucky enough to be there at the start of the genealogical digital revolution. I was involved in the National Archives’ plans to digitise the collection of Prerogative Court of Canterbury wills: in fact, I was one of a handful of people in a meeting at which it was decided to recommend that the PCC wills should be chosen as the first record set to get the digital treatment.

I was also actively involved in the 1901 and 1911 census projects so I think it’s safe to say that I have a fair understanding of how digitisation projects work.

Since those early projects, things have moved on and a significant proportion of users of the vast online genealogical databases hosted by commercial organisations such as Ancestry and Findmypast have no experience of what went before.

The commercial companies have, over the years, increasingly adopted a position of transcribing virtually everything from the original record – in fact, this was the policy when it came to the ground-breaking online release of the 1901 census. Unlike the very rudimentary census indexes produced by family history societies in the late 20th century, the default position is now to capture everyone’s names, ages, relationships, marital status, occupations and birthplaces, not to mention all the information relating to the properties themselves. And you can see why you would want to do this: the more information you transcribe, the easier it should be to find the person you’re looking for. It makes a lot of sense, certainly from the users’ point of view, particularly (and this is a very important point) for those users whose primary interest in the documents is not family history but some other discipline such as local history, social history, house history or demography.

But then what happens is that the commercial companies, who have clearly invested a lot of time and money in having these essentially comprehensive transcriptions of the censuses carried out, lose sight of why they were producing the transcript in the first place – remember, it was all about helping the users to find their people! – and they see an opportunity to recoup some of that investment. Why not – you can imagine the executives suggesting in a boardroom meeting – why not charge people to view the transcript? Why not turn the transcript into a marketable item?

But as soon as you decide to charge people to view the results of your transcription, as soon as you put a value on the transcription as a separate product, you raise the expectations of the user that what they are going to get is necessarily an accurate, word-for-word copy of the original.

Transcribing handwritten documents is a difficult task, particularly when, as is the case with our decennial census returns, the documents in question were written by thousands of different people, each, potentially, with their own idiosyncratic handwriting style. This is particularly true of the 1911 and 1921 censuses where the records we see are the schedules written by the householders themselves.

The idea that it might be possible to create a transcript which even approaches 100% accuracy is a pipe dream. If you were to apply the necessary academic standards to the task (the use of genuine experts, including those with the necessary local knowledge, to carry out the transcription itself; rigorous supervision of the whole transcription process including double-keying throughout; access to relevant reference works; a team of suitably qualified editors to check everything; and, most importantly, lots of time) the cost would instantly make the entire project commercially unviable. And even then, it wouldn’t be anywhere near 100% accurate. It’s simply not possible to work out in every single case what a particular word or character was supposed to be.

When I worked for the Public Record Office (as it was then) on the 1901 census project, I was constantly told that an accurate transcription was deliverable – even if (as I recall) the target was 95% accuracy. But it wasn’t then, and it still isn’t now.

My personal experience is that because of the virtually comprehensive manner in which the data is captured it is almost always possible to find the person I’m looking for – however bad some of the transcription might be. The search functionality is so flexible that even if just one piece of data relating to an individual is transcribed accurately (perhaps their birthplace or occupation) that can be enough to allow you to identify them. And this is no different to countless other databases that we search every day.
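To show why comprehensive capture makes such a difference, here is a rough Python sketch of a multi-field search. It is emphatically not how Findmypast’s search works; the records, the field names and the `search` function are all hypothetical, and the surname in the first record has been garbled on purpose.

```python
# Hypothetical transcribed records; none of these are real census entries.
# The name in the first record is deliberately mistranscribed.
records = [
    {"name": "Jno Srnith", "age": 34, "occupation": "Coal Miner", "birthplace": "Barnsley"},
    {"name": "Ann Brown", "age": 29, "occupation": "Weaver", "birthplace": "Leeds"},
    {"name": "John Smith", "age": 61, "occupation": "Farmer", "birthplace": "Ripon"},
]


def search(records, **criteria):
    """Return records matching every supplied field; omitted fields are ignored."""

    def matches(record):
        for field, wanted in criteria.items():
            value = record.get(field)
            if isinstance(wanted, str):
                # Text fields: case-insensitive 'contains' match.
                if wanted.lower() not in str(value).lower():
                    return False
            elif value != wanted:
                # Other fields (such as age): exact match.
                return False
        return True

    return [record for record in records if matches(record)]


# Searching on the name alone misses the garbled entry entirely...
by_name = search(records, name="John Smith")
# ...but the correctly transcribed birthplace and occupation still find it.
by_place = search(records, birthplace="Barnsley", occupation="miner")
```

Here `by_name` returns only the farmer from Ripon, while `by_place` picks up the miner whose name was mangled. The more fields that are captured, the more routes there are around a bad transcription of any one of them.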

https://search.findmypast.co.uk/search-world-records/1921-census-of-england-and-wales

So, as I see it, the problem with the 1921 census transcription is not that the work in this case isn’t up to scratch (that may or may not be true) but that the commercial providers have raised the users’ expectations so far with the promise of what they call ‘a legible translation of the original record’ that when those expectations (inevitably!) are not met, the disappointment is naturally greater than it might be.

© David Annal, Lifelines Research, 22 May 2022

[1] Microfilm, Mormons and the Technology of the Archive, Hannah Little, eSharp Journal, University of Glasgow, Issue 12, Winter 2008. https://www.gla.ac.uk/research/az/esharp/esharp/12winter2008technologyandhumanity/

Well said, David. Can I refer the readers of my monthly newsletter to your article, please? Jackie Cotterill, Midland Ancestors.

Yes, that would be great, thank you!

David

Thank you for another interesting piece – as usual, I will include a link to it in my regular Web Watch article in my local FHS journal.

I am lucky having a base in London and a Freedom Pass. Kew is easy and free.

I have spent several days at Kew downloading both transcripts and images [front and back] of 1921 onto a laptop. Free.

I have searched in advance at home to find the key index fields to maximise time at Kew.

I then study them back home. The image is key, but the transcript is checked thoroughly before being pasted into my FH software, avoiding retyping the lot and adding my own typos. It would be included in a Gedcom shared with others, whereas the images have copyright implications.

But I agree that those downloading at home should just pay for the images.

Best wishes

David Wharton

Thanks David!

I especially like your point that it’s almost always possible to find the person we’re seeking because of the scope of the transcription. Good post and many thanks!

Thanks Marian!
